DataRepresentation

5. データ表現

5.1. 概要

コンピュータは情報によっていろいろな仕事をする機械です.コンピュータによって,あなたは文書,画像,動画,音,表計算,データベースの中の情報を見たり,聞いたり,作ったり,編集したりすることができます.また,現実には存在せず,コンピュータのメモリとスクリーンに表示された情報によって成り立つシミュレートされた世界の中でゲームを遊ぶことができます.さらに数値情報を計算したり,ネットワークを通じて情報を送ったり,受け取ったりできます.これらすべての基礎として,コンピュータは情報をメモリ内に納めたり,ディスクに保存したり,ネットワークで送ったりするため,何らかの方法で情報を表現しなければいけません.

コンピュータを作りやすく,信頼性があるようにするために,すべてのものがたった2つの値で表現されます.2つの値は0と1で表現されているように見えていると思いますが,コンピュータの中では2つの状態をもつ何かで表現されています.たとえば,メモリの中で0と1を憶えるためには,電圧の高い・低いが用いられます.磁気ディスクの中では驚くことにディスク上の小さなスポットの磁気がNかSかで記憶されています.

私たちは通常,紙の上でコンピュータ上で憶えられる情報を表すのに一方の状態を"0",もう一方を"1"で表します.もしコンピュータのメモリのある部分の電圧が「低低高低高高高高低高低低」だった場合,私たちは「低」を"0",「高」を"1"に割り当てることで,"001011110100"という数字の列で表すことができます.この表現は広く使われていて,データが"0"と"1"の列で表されていることをよく耳にすると思いますが,コンピュータは数をそのまま憶えることはできません.それらはあくまで電圧の高低であったり,磁極のNとSであったり,色の明るい・暗いなどのことなのです.

用語集

2つの数字,0と1を使うことは,非常に一般的なので,もっともよく知られているコンピュータ用語の由来になっています.2つの数字のみで数を表す体系ことを二進法と呼びます.英語で二進法の数を表す"binary digit"の最初の2文字と最後の1文字をとって短縮して作られた言葉が"bit"(ビット)で,2つの値をとる1桁の数を表します.

あなたが保存したすべてのファイル,あなたが撮ったすべての写真,すべてのダウンロードしたものは全部,ビットで成り立っています.計算機科学者はビットそのものを読むのに多くの時間は使いませんが,どのようにそれらを貯えておくかが本当に重要であることを知っています.なぜなら,それがデータを貯えておくために必要な領域の大きさや,貯えられているものの質に影響を与えるからです.あなたは「24ビットカラー」「128ビット暗号化」「32ビットのIPv4アドレス」「8ビットのASCII」といった言葉を見かけたことがあるでしょう.ビットの役割を理解することで,質の高い色,破られにくい秘密の符号,世界中のすべて機器に個別のIDを付ける,普段使われる英語のアルファベットより多くの文字を使うテキスト,などを実現するためにどれだけの領域が必要かがわかるようになります.

この章では異なった種類の情報をビットのパターンとしてコンピュータで符号化するためのいろいろな方法と,コンピュータ上でのコストと質に与える,それらの符号化の影響について紹介します.

5.2. はじめに

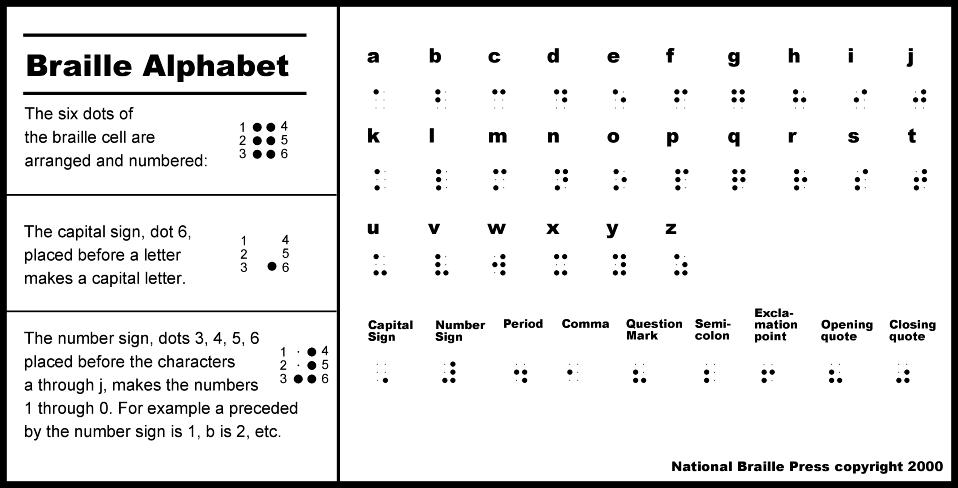

200年以上前,15歳のフランスの少年が触れることで文章が読めるように紙の上の平らな部分と盛り上がった点の組み合わせで文章を表す体系を発明しました.この体系を使うと,文章を視ることなくなく,比較的早く,間違いなく「読める」ので,視力に障害を持つ人々の間で広く使われるようになりました.ルイ・ブライユの作った体系はデータを「2進」表現した初期の例です.そこには「盛り上がり」と「平ら」という2つの記号しかありませんが,それにもかかわらず,それを組み合わせることにより事典や文学作品を表現することができるのです.ブライユの方法で表された文字−点字はそれぞれの文字が6つの点の集まりで表されます.それぞれの点は「盛りがっている」か「盛り上がってない」かで表され,異なった数や文字は異なった点のパターンによって表されます.

点字の6つの点で,いくつの異なったパターンができるか計算してみましょう.この節の教材では,点字の盛り上がった点を表す代わりに,小さな6つの円で長方形を作り,盛り上がっている点は円を塗りつぶし,そうでない点は塗りつぶさないということで表現することにします.

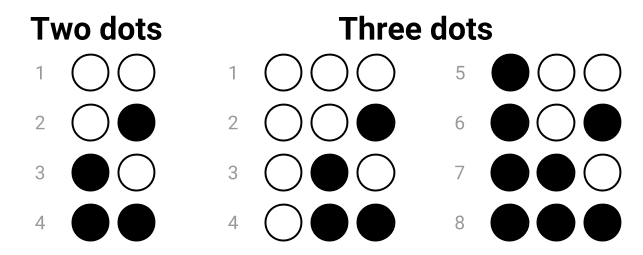

もし点字が2つの点しか使わないのであれば,表せるのは4つのパターンで,以下の組み合わせになります.

3つの点ならば,8つのパターンが表現でき,以下の組み合わせになります.

3つの点で表されるパータンが2つの点で表されるパータンの2倍になっていることに気がついたでしょうか.実は,1つ天を追加する毎に2倍のパターンが表現できるのです(なぜでしょう?).ですので,4つの点ならば16のパターン,5つの点ならば32のパターン,6つの点ならば64のパターンが表現できます.

したがって,点字は64のパターンを表すことができます.これは,アルファベットのすべての文字に加え,数字や句読点などの他の記号を表すのに十分なものです.

点字はまた,2進表現がなぜよく用いられるのかを示しています.「平ら」「半分盛り上がっている」「完全に盛り上がっている」という3種類の点を使うことは可能です.熟練した点字を読み手ならばこれらを区別し,それぞれの点がどの値とるのかが分かます.こうすると,4つの点で64パターンを表現できるようになります.しかし,点を作るのにより正確な道具が必要になり,点字を読む人はより正確に点を読み取る必要があるという問題が生じます.また,もし紙がたとえ少しでも押しつぶされていれば,その情報は読めなくなってしまいます.

デジタル機器はほぼ同じ理由で,ほとんどの場合,2つの値(2進)を使用します.コンピュータのディスクやメモリは,2つの両極に分かれた値(例えば電圧の高低)だけを区別すればいいようにすれば,電圧の微妙な違いになどによって細かく分けられた値を区別するよりも安価でサイズを小さくすることができます.計算も2進の値を使う方が簡単になります.なぜなら,2つの数(0と1)しか持たないのであれば,必要な規則は多くなく,1桁の数の足し算は,0+0, 0+1, 1+0, 1+1を計算する回路があれば計算できます.一般的な10進の計算をする場合,1桁の10進の数の足し算にどれだけの組み合わせがあるか計算してみてください.

実際,コンピュータ上の各種のファイルはたくさんの2進の数で表現されています.テキスト,写真,表計算,Webページ,歌などすべてが,たった2つの値を使って保存されているのです.あなたが実行するプログラム(アプリケーション)でさえ,2進表現を用いており,ときにコンピュータで実行するプログラムは「バイナリファイル」と呼ばれます.コンピュータ上のすべてのファイルが2進(バイナリ)なのでちょっと変なのですが.

5.3. ビットでテキストを表現する

前の節で点字の6つの点を使うことによって64のパターンが表現できることを見てきました.あなたのキーボードを使ってテキストエディタでいくつの大文字,小文字,数字,記号を入力できるか数えてみましょう.(数字キーと共有して置かれた記号や脇の方にある句読点のための記号も忘れないようにしてください.)これらの総称して「文字(キャラクタ)」と呼びます.たとえば,'a', 'D', '1', 'h', '6', '*', ']', '~' はキャラクタです.

(64のパターンを表現できる)6つの点はすべてのキャラクタを表現するのに十分だったでしょうか?正しく数えられていたならば,64より多くのキャラクタがあることがわかったと思います.あなたがキーボードで数えたすべてのキャラクタを表現するためには何ビットが必要なのでしょうか?

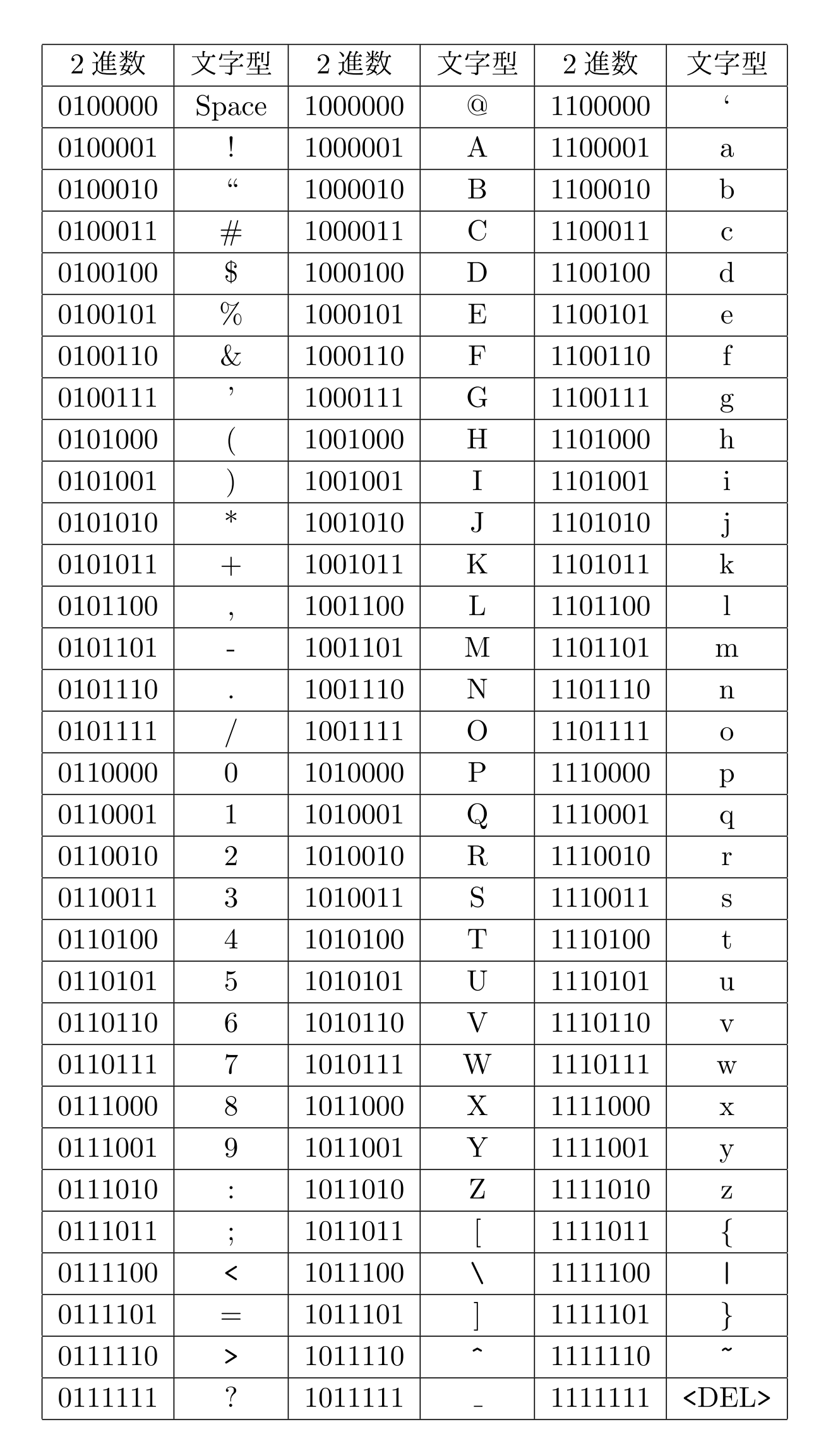

It turns out that 7 dots is enough as this gives 128 possible patterns, and this is exactly what the ASCII code for text does. ASCII is one of the main systems that computers use to represent English text. It was first used commercially in 1963, and despite the big changes in computers since then, it is still the basis of how English text is stored on computers.

ASCII assigned a different pattern of bits to each of the characters, along with a few other “control” characters that you don’t need to worry about yet. For reasons that we will get to later, each pattern in ASCII is usually stored in 8 bits, with one wasted bit, rather than 7 bits. However, the first bit in each 8-bit pattern is a 0, meaning there are still only 128 possible patterns.

Below is a table that shows the patterns of bits that ASCII uses for each of the characters.

For example, the letter c (lower-case) in the table has the pattern “01100011” (the 0 at the front is just extra padding to make it up to 8 bits). The letter o has the pattern “01101111”. You could write a word out using this code, and if you give it to someone else, they should be able to decode it exactly.

Computers can represent pieces of text with sequences of these patterns, much like Braille does. For example, the word “computers” (all lower-case) would be 01100011 01101111 01101101 01110000 01110101 01110100 01100101 01110010 01110011.

How would you represent the word “science” in ASCII? What about “Wellington” (note that it starts with an upper-case “W”)? How would you represent “358” in ASCII (it is three characters, even though it looks like a number)? What about the sentence “Hello, how are you?” (look for the comma, question mark, and space characters in the ASCII table).

Curiosity

If you only wanted to represent the 26 letters of the alphabet, and weren’t worried about upper-case or lower-case, you could get away with using just 5 bits, which allows for up to 32 different patterns. Have a look at the last 5 bits of each of the 26 lower-case letters in ASCII. Do any of the 26 lower-case letters have the same last 5 bits? Have a look at the 26 upper-case letters. Do any of the upper-case letters have the same last 5 bits?

You may have noticed that none of the lower-case letters have the same last 5 bits, but they do have the same last 5 bits as their corresponding upper-case letter!

For example, a = 1100001 and A = 1000001, they both have 00001 as their last 5 bits. As another example, s = 1110011 and S = 1010011, they both have 10011 as their last 5 bits.

An easy way to allocate patterns in this 5 bit system would be to just use the last 5 bits for each character in the ASCII table. Therefore A would be 00001, b would be 00010, c would be 00011, etc.

The word “water” would be 10111 00001 10111 10100 10010

There’s an activity that uses five-bit text codes hidden in music here.

English text can easily be represented using ASCII, but what about languages such as Chinese where there are thousands of different characters? The 128 patterns aren’t nearly enough to represent such languages! That’s where codes that use more than 7 bits become important, and in a later section we’ll look at these, but first we need to explore binary number representation and develop some efficient ways to talk about longer binary numbers.

Curiosity

The name “ASCII” stands for “American Standard Code for Information Interchange”, which was a particular way of assigning bit patterns to the characters on a typewriter. The ASCII system even includes “characters” for ringing a bell (useful for getting attention on old telegraph systems), deleting the previous character (kind of an early “undo”), and “end of transmission” (to let the receiver know that the message was finished). These days those characters are rarely used, but the codes for them still exist (they are the missing patterns in the table above). Nowadays ASCII has been surplanted by a code called “UTF-8”, which happens to be the same as ASCII if the extra left-hand bit is a 0, but opens up a huge range of characters if the left-hand bit is a 1.

There are several other codes that were popular before ASCII, including the Baudot code and EBCDIC.**5.4. Representing numbers with bits [#s51d6bd3]

The number system that humans normally use is in base 10 (also known as decimal). It’s worth revising quickly, because binary numbers use the same ideas as decimal numbers, just with fewer digits! In decimal, the value of each digit in a number depends on its place in the number. For example, in the amount $123, the 3 represents $3, whereas the 1 represents $100. Each place value in a number is worth 10 times more than the place value to its right, i.e. there are the “ones”, the “tens”, the “hundreds”, the “thousands” the “ten thousands”, the “hundred thousands”, the “millions”, etc. Also, there are 10 different digits (0,1,2,3,4,5,6,7,8,9) that can be at each of those place values.

If you were only able to use one digit to represent a number, then the largest number would be 9. After that, you need a second digit, which goes to the left, giving you the next ten numbers (10, 11, 12... 19). It’s because we have 10 digits that each one is worth 10 times as much as the one it its right.

You may have encountered different ways of expressing numbers using “expanded form”. For example, if you want to write the number 90328 in expanded form you might have written it as:

90328 = 90000 + 300 + 20 + 8

A more sophisticated way of writing it is:

90328 = (9 x 10000) + (0 x 1000) + (3 x 100) + (2 x 10) + (8 x 1)

If you’ve learnt about exponents, you could write it as 90328 = (9 x 104) + (0 x 103) + (3 x 102) + (2 x 101) + (8 x 100)

Remember that any number to the power of 0 is 1. i.e. the 8 x 100 is 8, because the 100 is 1.

The key ideas to notice from this are that the digit on the right (such as the 8 in 90328) is the one that’s worth the least, and that because we have 10 digits, each place is worth 10 times as much as the one to the right (e.g. the 2 in 90328 is the number of tens, the 3 is the number of 100s, and so on). Exactly the same happens with binary numbers.

5.4.1. Binary numbers

As discussed earlier, computers can only store information using bits, which only have 2 possible states. This means that they cannot represent base 10 numbers using digits 0 to 9, the way we write down numbers in decimal; instead, they use a base 2 number system, also called binary.

Curiosity

The base 10 (decimal) system is sometimes called denary, which is more consistent with the the name binary for the base 2 system. The word “denary” also refers to the Roman denarius coin, which was worth ten asses (an “as” was a copper or bronze coin).

Because binary is base 2, there are only 2 possible digits (0 and 1), as opposed to the 10 in our standard number system, and each place value is 2 times bigger than the one to its right (in contrast to our base 10 number system where each place is 10 times bigger).

The interactive below illustrates how this binary number system represents decimal numbers. Have a play around with it to see what patterns you can see. The decimal (base 10) representation for the binary number currently shown is given by the interactive on the far right.

★コンテンツは準備中です★

To ensure you are understanding correctly how to use the interactive, verify that when you enter the binary number 101101 it shows that the decimal representation is 45, that when you enter 100000 it shows that the decimal representation is 32, and when you enter 001010 it shows the decimal representation is 10.

You should try using the interactive to convert a decimal number to binary. For example, choose a number less that 61 (perhaps your house number, a friend’s age, or the day of the month you were born on), set all the binary digits to zero, and then start with the left-most digit (32), trying out if it should be zero or one. See if you can find a method for converting the number without too much trial and error.

Can you figure out the binary representation for 23 without using the interactive? What about 4, 0, and 32? Check all your answers using the interactive to verify they are correct.

What is the largest number you can make with this binary interactive? What is the smallest? Is there any integer value in between the biggest and the smallest that you can’t make?

You have probably noticed from the interactive that when set to 1, the leftmost bit (the “most significant bit”) adds 32 to the total, the next adds 16, and then the rest add 8, 4, 2, and 1 respectively. When set to 0, a bit does not add anything to the total. So the idea is to make numbers by adding some or all of 32, 16, 8, 4, 2, and 1 together, and each of those numbers can only be included once.

Rather than just using trial and error to figure out what a decimal number is in binary, could you figure out a systematic approach? Have a look at what 100000 is in binary. What about 011111? Is it possible to make a number over 32 if the most significant bit is set to a 0? Why? And what about 001000 and 000111? Can you see a pattern that would lead to a systematic way of converting decimal numbers to binary? Hint: start with deciding the leftmost bit, and then work along to the right, bit by bit.

So what happens if we have fewer than 6 bits? For example, with 5 bits, the place values would be 16, 8, 4, 2 and 1, so the largest value is 11111 in binary, or 31 in decimal. What’s the largest value you can store with 4 bits? 3 bits?

What would happen if we have 7 bits instead of 6? The seventh bit would have a value of 64, and it would be possible to store numbers up to 127.

Extra for Experts

Can you figure out a systematic approach to counting in binary? i.e. start with the number 0, then increment it to 1, then 2, then 3, etc, all the way up to the highest number that can be made with the 6 bits. Try counting from 0 to 16, and see if you can detect a pattern. Hint: Think about how you add 1 to a number in base 10. e.g. how do you work out 7 + 1, 38 + 1, 19 + 1, 99 + 1, 230899999 + 1, etc? Can you apply that same idea to binary?

Using your new knowledge of the binary number system, can you figure out a way to count to higher than 10 using your 10 fingers? What is the highest number you can represent using your 10 fingers? What if you included your 10 toes as well (so you have 20 fingers and toes to count with).

An important concept with binary numbers is the range of values that can be represented using a given number of bits. One bit on its own might not seem very useful, but it’s enough to store things like the state of a checkbox (checked or not checked). When we have 8 bits the binary numbers start to get useful — they can represent values from 0 to 255, so it is enough to store someone’s age, the day of the month, and so on.

Jargon Buster

Groups of 8 bits are so useful that they have their own name: a byte. Computer memory and disk space is usually divided up into bytes, and bigger values are stored using more than one byte. For example, two bytes (16 bits) are enough to store numbers from 0 to 65,535. Four bytes (32 bits) can store numbers up to 42,94,967,295. You can check these numbers by working out the place values of the bits. Every bit that’s added will double the range of the number.

Curiosity

Candles on birthday cakes use the base 1 numbering system, where each place is worth 1 times the one to its right(!) For example, the number 3 is 111, and 10 is 1111111111. This can cause problems as you get older — if you’ve ever seen a cake with 100 candles on it, you’ll be aware that it’s a serious fire hazard.

Luckily it’s possible to use binary notation for birthday candles — each candle is either lit or not lit. For example, if you are 18, the binary notation is 10010, and you need 5 candles (with only two of them lit).

There’s a video on using binary notation for counting up to 1023 on your hands, as well as using it for birthday cakes>[[EBCDIC]].

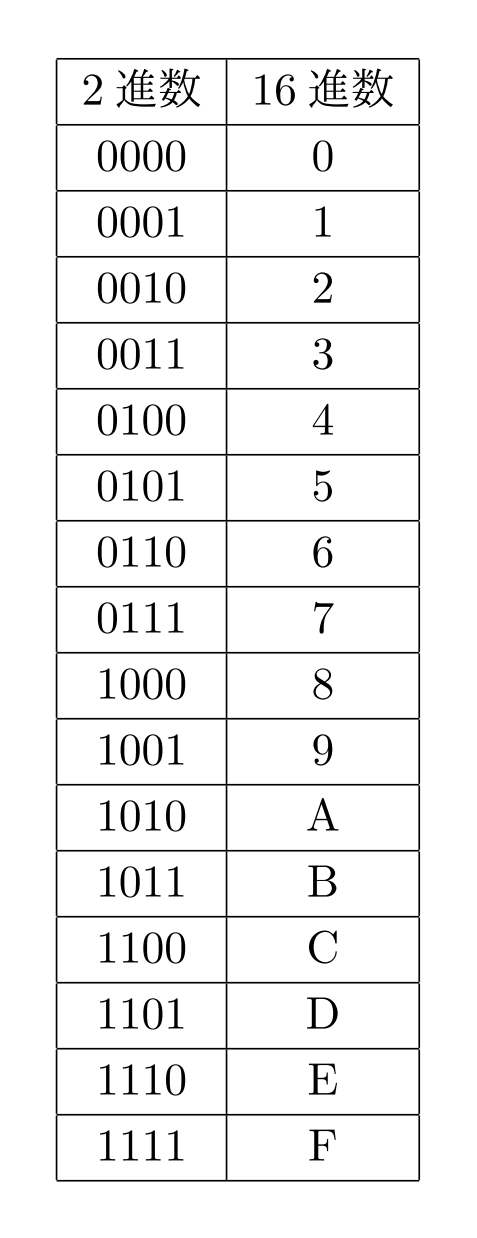

5.4.2 Shorthand for binary numbers

Most of the time binary numbers are stored electronically, and we don’t need to worry about making sense of them. But sometimes it’s useful to be able to write down and share numbers, such as the unique identifier assigned to each digital device (MAC address), or the colours specified in an HTML page.

Writing out long binary numbers is tedious — for example, suppose you need to copy down the 16-bit number 0101001110010001. A widely used shortcut is to break the number up into 4-bit groups (in this case, 0101 0011 1001 0001), and then write down the digit that each group represents (giving 5391). There’s just one small problem: each group of 4 bits can go up to 1111, which is 15, and the digits only go up to 9.

The solution is simple: we introduce symbols for the digits for 1010 (10) to 1111 (15), which are just the letters A to F. So, for example, the 16-bit binary number 1011 1000 1110 0001 can be written more concisely as B8E1. The “B” represents the binary 1011, which is the decimal number 11, and the E represents binary 1110, which is decimal 14.

Because we now have 16 digits, this representation is called hexadecimal (or hex for short). Converting between binary and hexadecimal is very simple, and that’s why hexadecimal is a very common way of writing down large binary numbers.

Here’s a full table of all the 4-bit numbers and their hexadecimal digit equivalent:

For example, the largest 8-bit binary number is 11111111. This can be written as FF in hexadecimal. Both of those representations mean 255 in our conventional decimal system (you can check that by converting the binary number to decimal).

The largest 16 bit binary number is 1111111111111111, or FFFF in hexadecimal. Both of these represent 65535 in decimal.

The hexadecimal system is also known as base 16. The following interactive converts hexadecimal numbers to decimal (base 10), which provides another way of thinking about them. But don’t forget that the main point is that hexadecimal is an easy shorthand for binary representation.

★コンテンツは準備中です★

Which notation you use will depend on the situation; binary numbers represent what is actually stored, but can be confusing to read and write; hexadecimal numbers are a good shorthand; and decimal numbers are used if you’re trying to understand the meaning of the number. All three get used in computer science.

5.4.3 How to binary numbers

The length of a binary number determines the range of values it can represent. Often on computers we are dealing with text, images and sound rather than numbers, but they do appear in quite a few places, and the accuracy with which they are represented can affect what we can do on a computer.

For example, numbers in spreadsheets usually have a finite precision. Try putting the formula "=1/3" into a spreadsheet, and have it represented with maximum accuracy. How many decimal places does it store? This will be dictated by the number of binary digits that the spreadsheet is storing.

Many programming languages allow the programmer to specify the number of bits used to represent each variable (e.g. in the C language a "short int" is 16 bits or more, and a "long int" is at least 32 bits); if you are working with a language then they could investigate limits on how numbers are represented. Note that some languages, including Python, seamlessly changes the size of the representation of an integer if it gets too large, so it's harder to explore these issues in Python.

Another situation where different numbers of bits in a representation is important is IP (Internet Protocol) and MAC (media access control) addresses for devices; the recent change from IPv4 to IPv6 was driven by the number of devices you could represent, and if you are interested in networks could explore the number of bits used for an address, and how many possible devices could exist before we run out of numbers.

5.5. Representing images with bits

Warning

This section assumes that you understand binary numbers. If you are confused by binary numbers still, you should go back to the binary numbers section and work through the material there again until you understand it. The first part of this section is possible to understand without understanding binary numbers, although in order to actually use the material for assessment purposes, you will need to understand binary numbers, as the key idea is representing colours using bits, and the bits in colours are decided based on numbers.

In school or art class you may have mixed different colours of paint or dye together in order to make new colours. This was probably very helpful if the exact colour you wanted was not present in your palette, in addition to just being fun to experiment with! When mixing paints, red and blue would give purple. If you mixed yellow and blue, you would get green. If you mixed red and yellow, you would get orange. If you mixed an even amount of the 3 primary colours; blue, red, and yellow together, you should get black, although often it would be a murky brown. By mixing together various amounts of the three primary colours, along with white and black, you can make many different colours.

Actually, while the colours blue, red and yellow are commonly used in art classes, the very similar primary colours that work better for printing are cyan, magenta and yellow (CMY), which are commonly found in computer printers as well as printing presses. This kind of mixing is called “subtractive mixing”, because it starts with a white canvas or paper, and subtracts colour from it.

Of course, a computer screen or printout doesn’t have just one colour on it — it has millions of small pixels, each of which has a particular colour.

Jargon Buster

The word pixel is short for “picture element”. On computer screens and printers an image is created by a grid of pixels, each one set to the required colour. A pixel is typically a fraction of a millimeter across, and images can be made up of millions of pixels (one megapixel is a million pixels).

Computer screens and related devices also rely on mixing colours, except they go about it in quite a different way — they use a different set of primary colours, because they are additive, starting with a black screen and add colour to it. For additive colour on computers, the colours red, green and blue (RGB) are used. Each pixel on a screen has 3 tiny lights; one red, one green, and one blue. By increasing and decreasing the amount of light coming out of each of these 3 lights, all the different colours can be made.

You can try additive colours in the following interactive; try different combinations of each slider. How do you generate yellow? What happens if they are all at zero? All at full value (255)? Halfway? What happens if one colour is at full, and the other two are at halfway? How do you get shades of purple, yellow, orange, and pink? What happens when you have the same amount of each colour? How do you get black? How do you get white?

The key idea is that you can specify the colour of a pixel using three numbers. In the above example, each number is from 0 to 255. With 256 possible values for each of the three components, that gives 256 x 256 x 256 = 16,777,216 possible colours, which is more than the human eye can detect. In other words, using just three numbers, you can specify pretty much any colour you want — and probably a lot that you don’t.

Curiosity

The human eye has millions of light sensors in it, and the ones that detect colour are called “cones”. There are three different kinds of cones, which detect red, blue, and green light respectively. Colours are perceived by the amount of red, blue, and green light in them. Computer screen pixels take advantage of this by releasing the amounts of red, blue, and green light that will be perceived as the desired colour by your eyes. So when you see “purple”, it’s really the red and blue cones in your eyes being stimulated, and your brain converts that to a perceived colour.

For more information about RGB displays, see RGB on Wikipedia; for more information about the eye sensing the three colours, see Cone cell and trichromacy on Wikipedia.

Of course, you usually have more than one colour displayed on a screen. In fact, even the smallest computer screens have millions of pixels on them, and the computer needs to represent a colour for each one of those pixels. These days photographs are measured in megapixels (millions of pixels). To store the image, your computer is storing a colour for every one of those pixels, and each of those could be using the three numbers above. So a 2 megapixel photo, in its simplest form, needs 6 million numbers to be recorded to represent it accurately.

★コンテンツは準備中です★

5.5.1. Representing high quality images using bits

So now, how can computers represent each possible colour using bits? You may have noticed in the above interactive that for each of red, green, and blue, there are 256 different positions the slider can be in (don’t forget to include setting the slider to 0). From the numbers section, you may remember that to get 256 different possibilities, you need 8 bits. So for example, to represent the current value of the red slider, you would need 8 bits (28 = 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 = 256).

Because there are three primary colours, each of which has 256 different possible values, we need 24 bits in order to have enough possible bit patterns to represent all the possible colours that this scheme can represent (3 x 8 = 24).

If you calculate 224 (i.e. the number of bit patterns you can get with 24 bits), and 256 x 256 x 256 (i.e. the number of possible colours that can be represented using the above interactive), you will find that the result of these two calculations are the same; 16,777,216. This means that there are 16,777,216 different possible colours that can be represented using this scheme, and that’s more colours than most people can distinguish, which is why 24-bit colour is regarded as high quality.

So now that we know we’ll need 24 bits to represent all the possible colours that can be made from the scheme in the interactive, how can we assign colours to bit patterns?

A sensible way is to use 3 binary numbers that represent the amount of each of red, green, and blue in the pixel. In order to do this, you can simply convert the decimal values on the interactive that specify how much of each of the primary colours is making up the resulting colour into binary, and put them side by side to make a full pattern of 24 bits. Because consistency is important in order for a computer to make sense of the bit pattern, the binary number for red should be put first, followed by green, and then finally blue.

As an example, suppose you have the colour that has red = 145, green = 50, and blue = 123 (it is a shade of purple shown in the square above; you can see it if you set the sliders to those values in the interactive above). You need to convert each of the 3 numbers into binary, using 8 bits for each. You can either do this by hand if you are confident with binary numbers, use the binary number interactive in the above section, or use a binary piano. You should get red = 10010001, green = 00110010, and blue = 01111011. This can be written as 100100010011001001111011, which is the bit pattern for representing that shade of purple. Note that there are no spaces between the 3 numbers, as this is a pattern of bits rather than actually being 3 binary numbers, and computers don’t have any such concept of a space between bit patterns anyway — everything must be a 0 or a 1. You could write it with spaces to make it easier to read, and to represent the idea that they are likely to be stored in 3 8-bit bytes, but inside the computer memory there is just a sequence of high and low voltages, so even writing 0 and 1 is an arbitrary notation. Note that all leading and trailing 0’s on each of the components are kept — without them, it would be representing a shorter number. Make sure you work through this example yourself, to understand how it works.

As long as the computer knows this is a colour (typically because it has been taken from a file that is specifying colours, such as GIF or HTML), it will know that the first 8 bits specify the amount of red, the next 8 bits specify the amount of green, and the last 8 bits specify the amount of blue. The computer won’t actually convert the number into decimal, as it works with the binary directly — most of the process that takes the bits and makes the right pixels appear is typically done by a graphics card or a printer.

24 bit colour is sometimes referred to in settings as “True Color” (because it is more accurate than the human eye can see). On Apple systems, it is called “Millions of colours”.

5.5.2. Hexadecimal colour codes

When writing HTML code, you often need to specify colours for text, backgrounds, etc. One way of doing this is to specify the colour name, for example “red”, “blue”, “purple”, or “gold”. The use of names limits the number of colours you can represent and the shade might not be exactly the one you wanted. A better way is to specify the 24 bit colour directly. The problem is that strings of 24 binary digits are hard to read, and so colours in HTML use hexadecimal codes as a quick way to write the 24 bits, for example #00FF9E. The hash sign just means that it should be interpreted as a hexadecimal representation, and since each hexadecimal digit corresponds to 4 bits, the 6 digits represent 24 bits of colour information. This “hex triplet” format is used in HTML pages to specify colours for things like the background of the page, the text, and the colour of links. It is also used in CSS, SVG, and other applications.

In the 24 bit colour example earlier, the 24 bit pattern was 100100010011001001111011. This can be broken up into groups of 4 bits: 1001 0001 0011 0010 0111 1011. Substituting a hexadecimal digit for each of the 4-bit groups (using the table above) gives 91327B. This is the hexadecimal code for this colour!

The hexadecimal notation is extremely useful for people to read or write, as it is much easier to type 6 characters rather than 24 1’s and 0’s when specifying a colour!

For example, to specify the background colour of a page in HTML, the body tag can have a hexadecimal colour added to it like this:

<body bgcolor="#00FF9E">

You can use an HTML page to experiment with hexadecimal colours.

Understanding how these hexadecimal colour codes are derived also allows you to change them slightly without having to refer back the colour table, when the colour isn’t exactly the one you want. Remember that in the 24 bit color code, the first 8 bits specify the amount of red (so this is the first 2 digits of the hexadecimal code), the next 8 bits specify the amount of green (the next 2 digits of the hexadecimal code), and the last 8 bits specify the amount of blue (the last 2 digits of the hexadecimal code). To increase the amount of any one of these colours, you can change the appropriate hexadecimal letters.

For example, #000000 has zero for red, green and blue, so setting a higher value to the middle two digits (such as #002300) will add some green to the colour. What colours will the following codes give? #FF0000, #FF00FF, #FFFFFF ? (You can try them out using an HTML file).

5.5.3. Representing colours using fewer bits

What if we were to use fewer than 24 bits to represent each colour, i.e. each slider didn’t have as many possible positions it could be in? The following interactive shows what would happen with this limitation. You can select a colour by clicking on the image on the left, and then try to match it with the 24-bit colour sliders (if it’s too difficult, the system will offer to help you; to move the sliders by small amounts, you can use the arrow keys).

It should be possible to get a perfect match using 24 bit colour. Now try the 8-bit sliders. These ones have only 8 values for red and green, and just 4 values for blue!

★コンテンツは準備中です★

The above system used 3 bits to specify the amount of red (8 possible values), 3 bits to specify the amount of green (again 8 possible values), and 2 bits to specify the amount of blue (4 possible values). This gives a total of 8 bits (hence the name), which can be used to make 256 different bit patterns, and thus can represent 256 different colours.

Using this scheme to represent all the pixels of an image takes one third of the number of bits required for 24-bit colour, but it is not as good at showing smooth changes of colours or subtle shades, because there are only 256 possible colors for each pixel. This is one of the big tradeoffs in data representation: do you allocate less space (fewer bits), or do you want higher quality?

Jargon Buster

The number of bits used to represent the colours of pixels in a particular image is sometimes referred to as its “colour depth” or “bit depth”. For example, an image or display with a colour depth of 8-bits has a choice of 256 colours for each pixel. There is more information about this in Wikipedia. Drastically reducing the bit depth of an image can make it look very strange; sometimes this is used as a special effect called “posterisation” (ie. making it look like a poster that has been printed with just a few colours).

The following interactive shows what happens to images when you use a smaller range of colours (including right down to zero bits!) You can choose an image using the menu. In which cases is the change in quality most noticeable? In which is it not? In which would you actually care about the colours in the image? In which situations is colour actually not necessary (i.e. we are fine with two colours)?

One other interesting thing to think about is whether or not we’d want more than 24 bit colour. It turns out that the human eye can only differentiate around 10 million colours, so the 16 million provided by 24 bit colour is already beyond what our eyes can distinguish. However, if the image were to be processed by some software that enhances the contrast, it may turn out that 24-bit colour isn’t sufficient. Choosing the representation isn’t simple!

Note: The interactive here has not been updated to the new system. Please view the interactives on the Data Representation page on the older version of the CSFG. We are currently in the process of updating these interactives for release.

So is it worth the space saving to put up with a lower quality image? An image represented using 24 bit colour would have 24 bits per pixel. In 600 x 800 pixel image (which is a reasonable size for a photo), this would contain 600 x 800 = 480,000 pixels ,and thus would use 480,000 x 24 bits = 11,520,000 bits. This works out to around 1.44 megabytes. If we use 8-bit colour instead, it will use a third of the memory, so it would save nearly a megabyte of storage.

8 bit colour is not used much anymore, although it can still be helpful in situations such as accessing a computer desktop remotely on a slow internet connection, as the image of the desktop can instead be sent using 8 bit colour instead of 24 bit colour. Even though this may cause the desktop to appear a bit strangely, it doesn’t stop you from getting whatever it was you needed to get done, done. There are also other situations where colour doesn’t matter at all, for example diagrams, and black and white printed images.

If space really is an issue, then this crude method of reducing the range of colours isn’t usually used; instead, compression methods such as JPEG, GIF and PNG are used. These make much more clever compromises to reduce the space that an image takes, without making it look so bad, including choosing a better palette of colours to use rather than just using the simple representation discussed above. However, compression methods require a lot more processing, and images need to be decoded to the representations discussed in this chapter before they can be displayed. We will look at compression methods in a later chapter. The ideas in this present chapter more commonly come up when designing systems (such as graphics interfaces) and working with high-quality images (such as RAW photographs), and typically the goal is to choose the best representation possible without wasting too much space.

For the purposes of the NCEA standards, reducing the bit depth of an image is ok as a second compression method to compare to specialised compression methods (JPEG, PNG, GIF etc.), but isn’t very suitable for explaining how compression works (in the Achieved level requirements).

Now that you know how the 24 bit and 8 bit colour schemes work and how to represent them using bits, what are the implications of this in practice? The following interactive can be used to upload your own image, and experiment with allocating different numbers of bits to each colour. You can use it to demonstrate the effect of the different numbers of bits for this data representation.

5.6. General representations of text

In the introduction we looked at 8-bit ASCII representations of text (which really use 7 bits, allowing for 128 different symbols). As with any other kind of data represented in binary, we can get improvements by considering larger (or smaller) representations.

In the curiosity section earlier we observed that 5 bits are sufficient for simple coding of the English alphabet, and for very slow coding systems (like the video that contains hidden text using musical notes

) using 5 bits instead of 8 can save some time. The braille system uses only 6 bits for each character, which allows for 64 different characters, and it is also better than using 8 bits since it would take more paper and more time to read if the longer code was used.

But some languages have way more than 32, or 64, or even 128 characters in their alphabet. In fact, the majority of the world’s population use such languages! In this case, longer codes are needed, and the most widely used approach is a system called Unicode. A commonly used version of Unicode allows 16 bits per character. Because every extra bit that is added doubles the number of patterns possible, 16-bit codes have many more representations than 8 bit codes. In fact, with 16 bits there are 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 x 2 = 216 = 65,536 patterns that can be represented. This is enough to assign a unique pattern of bits to the main characters in the most common languages, although there are also standards that allow 32 bits (4 bytes) for each character.

The Unicode table is far too big to display in this book, but you can find a variety of tables on the internet, and use them to look up codes. This website displays all unicode characters with geographical data for appropriate characters. The 16- and 32-bit codes are usually written using hexadecimal since this is an easy abbreviation for the long binary codes, and sections of the Unicode alphabet (different languages) tend to be in multiples of 16.

The modern codes associated with Unicode are usually flexible in the size of the representation, so 8-bit characters can be used if that is sufficient, but 16- or 32- bit characters can be invoked for larger alphabets. If you are investigating these codes, you will come across standards such as the Universal Character Set (UCS), the Unicode/UCS Transformation Format (UTF-8 UTF-16, etc.), and the GB 18030 standard (which was mandated in the People’s Republic of China from the year 2000).

5.7. Computers representing numbers in practice

A common place that numbers are stored on computers is in spreadsheets or databases. Some of the things that we might think of as numbers, such as the telephone number (03) 555-1234, aren’t actually stored as numbers, as they contain important characters (like dashes and spaces) as well as the leading 0 which would be lost if it was stored as a number (the above number would come out as 35551234, which isn’t quite right). On the other hand, things that don’t look like a number (such as “30 January 2014”) are often stored using a value that is converted to a format that is meaningful to the reader (try typing two dates into Excel, and then subtract one from the other — the result is a useful number). Numbers are commonly used to store things as diverse as student marks, prices, statistics, and scientific readings.

Any system that stores numbers needs to make a compromise between the number of bits allocated to store the number, and the range of values that can be stored. For example, Excel spreadsheets have a maximum value that can be stored — try calculating 1/3, and display it to as many places of accuracy as possible. In some systems (like the Java and C programming languages and databases) it’s possible to specify how accurately numbers should be stored; in others it is fixed in advance (such as in spreadsheets). Some are able to work with arbitrarily large numbers by increasing the space used to store them as necessary (e.g. integers in the Python programming language).

There are two commonly used kinds of numbers: integers and floating point numbers. Integers are what you might know as whole numbers, and can be positive or negative, whereas floating point numbers can have a decimal point in them, and can also be positive or negative. In this section we are just going to focus on integers, as representing floating point numbers is a bit more difficult to understand (but well worth understanding if you use them a lot)!

The binary number representation in the previous section only allowed us to represent positive numbers. In practice, we will want to be able to represent negative numbers as well (such as when the amount of money earned goes to a negative amount, or the temperature falls below zero!) In our normal representation of base 10 numbers, we represent negative numbers by putting a minus sign in front of the number. On a computer we don’t have minus signs, but we can do it by allocating one extra bit, called a sign bit, to represent the minus sign. We can choose the leftmost bit as the sign bit — when the sign bit is set to “0”, that means the number is positive and when the sign bit is set to “1”, the number is negative (just as if there were a minus sign in front of it). For example, if we wanted to represent the number 41 using 6 bits (like above) along with an additional 7th bit that is the sign bit, assuming the sign bit is first, we would represent it by 0101001. The first bit is a 0, meaning the number is positive, then the remaining 6 bits give 41, meaning the number is +41. If we wanted to make -59, this would be 1111011. The first bit is a 1, meaning the number is negative, and then the remaining 6 bits give 59, meaning the number is -59.

Using 7 bits as described above (one for the sign, and 6 for the actual number), what would be the binary representations for 1, -1, -8, 34, -37, -88, and 102?

Suppose we have 8-bit numbers, with the left-most bit as a sign bit. What would the decimal values be for the following 10000110? 01111111? How about 10000000?

The representation 10000000 highlights a problem with this notation, as it represents the number -0, which is the same as 0. That is, there are two ways to represent the number 0, which is wasteful, and potentially confusing.

It turns out that there’s a notation called “two’s complement” for negative numbers, which avoids this wastage, and more importantly, makes it easier to do arithmetic with negative numbers. It’s beyond what is needed for this topic, but the following box gives some more information if you’d like to look into it.

Extra for Experts

Negative numbers are more often stored on computers using a system called “two’s complement”. This system makes it very easy to do arithmetic without having to treat negative numbers as a special case, so it’s faster and uses less circuitry. The principle is based on a fairly simple idea: for example, in decimal, if you had to subtract the number 4 from a value, it’s the same if you add 6 and subtract 10. Using the complement of the number -4 (i.e. 6) plus an indicator that it’s negative can make calculations quicker and simpler. A similar approach applies in binary, and it’s even easier because there are only two digits. More information is available here on how negative numbers work, and also on the Wikipedia page about two’s complement, although it’s quite technical.

Curiosity

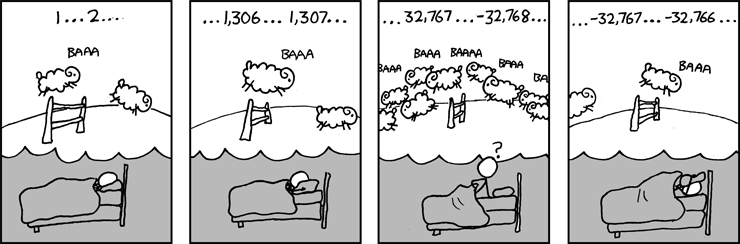

In some programming languages there isn’t a check for when a number gets too big (overflows). For example, if you have an 8-bit number using two’s complement, then 01111111 is the largest number (127), and if you add one without checking, it will change to 10000000, which happens to be the number -128. This can cause serious problems if not checked for, and is behind a variant of the Y2K problem, called the Year 2038 problem, involving a 32-bit number overflowing for dates on Tuesday, 19 January 2038.

Because of the way computer memory is constructed, memory is most commonly used in chunks of 8 bits or 32 bits (or even 64 bits) at a time. That means that if the computer is representing an integer as a binary number with a sign bit, it will commonly use 32 bits, where the first bit is the sign bit, and the other 31 bits represent the value of the number.

In a computer that uses 32 bits for a number, how many different numbers could it represent? What’s the largest number it could represent? Remember that every bit you add doubles how many numbers you can make. If you double 64 another 25 times (so that it is up to 31 bits), i.e. 128, 256, 512, 1024, 2048.... with an end result of 2,147,483,648. This means that there 2,147,483,648 numbers that can be represented with 31 bits, the highest of which is 2,147,483,647. This number is just over 2 billion. With the 32nd bit, the sign bit, this means that the number can be positive or negative. This is called a signed 32 bit integer. So with the signed 32 bit integer, you can represent any number between -2,147,483,647 and +2,147,483,647.

There is also such thing as a 32 bit *unsigned* integer. This does not have a signed bit, and the 32nd bit is included as part of the value. As a result, it can represent twice as many positive numbers (but no negative numbers) as the 32 bit signed integer above. This would be 4,294,967,296 different numbers, with 4,294,967,295 being the highest.

How many people are in the world? Would a 32 bit integer like described above be large enough to store a different identifier number for each person in the world? How many bits of accuracy would you want to allow for possible population growth?

| Type of Number | Unsigned Range | Signed Range |

|---|---|---|

| 8 bit signed | 0 to 255 | -128 to 127 |

| 16 bit signed | 0 to 65,535 | -32,768 to 32,767 |

| 32 bit signed | 0 to 4,294,967,295 | −2,147,483,648 to 2,147,483,647 |

| 64 bit signed | 0 to 18,446,744,073,709,551,615 | −9,223,372,036,854,775,808 to 9,223,372,036,854,775,807 |

So when you are storing values on a computer with very limited space, you need to be careful to pick a suitable kind of integer that has enough space, but isn’t wasting space. You also need to think about whether or not a number could potentially be negative.

Think of a few different examples for different sized integers (both signed and unsigned ones) of a piece of data that you could store in that sized integer. For example, the age of a person could be stored in an 8 bit unsigned integer (people can’t be a negative age!), and the number of students in your school could be stored in an 8 bit or 16 bit integer, depending on how big your school is! What other examples can you think of?

What are some examples of numbers you could not represent using any of these integers?

To compare two types of integer representation for the 2.44 standard, you could compare the potential use of signed and unsigned numbers to store values for some application you have in mind, and discuss now many bytes are needed, and the waste or overflows that could occur if you make the wrong choices.

Extra for Experts

Another type of number used in computer systems is the “floating point” value. While we won’t look at it in detail, to get a taste of what’s involved, consider the bit values in a 4-bit number, which are 8, 4, 2 and 1. What would the value of a bit to the right of the one bit be? And to the right of that one?

The following version of the base conversion interactive has bits that are smaller than the 1-bit. Try representing the decimal number 3.5 using this system. How about 2.8125? What about 2.8?

This system is a fixed-point number system; floating point numbers are based on this idea, but allow for the number of digits to be fixed, but the position of the point to change (by giving an exponent value).

5.7.1. Numbers in programming languages

If you are programming in a language (e.g. Python, Java, C, C++, C#) then the limitations of data representations become important very quickly, as you will have to choose what kind of data representation you want to use, and if it is too small then it can “overflow”. For example, if you allocate a variable to be stored as a 16 bit unsigned integer, and you are counting how many characters there are in a file, then it will fail after 65,535 characters — that’s just a 65 kilobyte file.

If the amount of memory your computer has to store its data in is very limited (for example, on a small portable device), you might not want to reserve 32 bits for a number if it is never going to be over 100. Or even if there is plenty of memory, if you are storing millions of data values then using 16-bit integers instead of 8-bit integers will waste millions of bytes of memory.

Working out the size of an integer used in a particular programming language may take some investigation, as they are usually declared with names like “int” and “long”, which don’t say explicitly how many bits they use. For example, in the Java programming language, there is a data type called the “byte”, which is an 8-bit integer that includes negative numbers (it goes from -128 to 127), whereas a “short” integer is 16 bits, an “int” is 32 bits, and a “long” is 64 bits. In some cases (such as the “int” type in C) the length of an integer depends on the version of the language of the type of computer it is running on, and in other cases (such as integers in Python) the representation is automatically changed for you if the number gets too big!

A good project for the 2.44 standard is to investigate the size allocated to integers in a programming language that you are using. You could allocate a variable to a type of integer, and then add values to it that take it out of range, demonstrating where the boundary is for that type of variable.

5.9. The whole story!

5.8 The Whole Story!

The kind of image representations covered here are the basic ones used in most digital systems, and the main point of this chapter is to understand how digital representations work, and the compromises needed between the number of bits, storage used, and quality.

The colour representation discussed is what is often referred to as “raw” or “bitmap” (bmp) representation. For large images, real systems use compression methods such as JPEG, GIF or PNG to reduce the space needed to store an image, but at the point where an image is being captured or displayed it is inevitably represented using the raw bits as described in this chapter, and the basic choices for capturing and displaying images will affect the quality and cost of a device. Compression is regarded as a form of encoding, and is covered in a later chapter.

The representation of numbers is a whole area of study in itself. The choice of representation affects how quickly arithmetic can be done on the numbers, how accurate the results are, and how much memory or disk space is used up storing the data. Even integers have issues like the order in which a large number is broken up across multiple bytes. Floating point numbers generally follow common standards (the IEEE 754 standard is the most common one) to make it easy to design compatible hardware to process them. Spreadsheets usually store numbers using a floating point format, which limits the precision of calculations (typically about 64 bits are used for each number). There are many experiments that can be done (such as calculating 1/3, or adding a very large number to a very small one) that demonstrate the limitations of floating point representations.

The chapter does not (yet) cover other forms of data representation, and you may wish to explore these as alternatives. The common ones are:

- sound (wave files and related storage; for example, 16-bit samples are used for “CD quality”, but professional systems use 24-bit or even higher) — for some information, see the Teach with ICT page on sound representation.

- video (which are based on multiple images being played one after the other; however, these files are so large that they are almost never stored as a “raw” representation)

5.9. Further reading

This puzzle can be solved using the pattern in binary numbers: http://www.cs4fn.org/binary/lock/

This site has more complex activities with binary numbers, including fractions, multiplication and division.

5.9.1. Useful Links

- Basics of binary numbers

- Representing bits using sound

- Hex game

- Thriving in our digital world has good illustrations of data representation